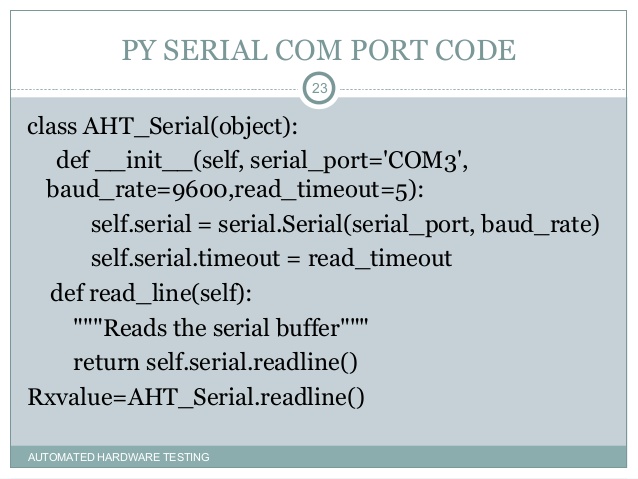

If the program is having trouble keeping up with incoming serial data, increase the buffer size (BUFFER_SIZE = 5). NUM_OF_LINES = 3 is the number of data streams to plot (it should be one less than the number of columns). The x-axis range is the size of the x-limits to keep on screen as the chart scrolls.

read(size): reads up to size bytes from the serial port input buffer.

readline(): reads serial port data until a '\n' (newline) character is seen, then returns the line read so far.

This page describes exactly what Python definitions the protocol buffer compiler generates for any given protocol definition. Any differences between proto2 and proto3 generated code are highlighted - note that these differences are in the generated code as described in this document, not the base message classes/interfaces, which are the same in both versions. You should read the proto2 language guide and/or proto3 language guide before reading this document.

The Python Protocol Buffers implementation is a little different from C++ and Java. In Python, the compiler only outputs code to build descriptors for the generated classes, and a Python metaclass does the real work. This document describes what you get after the metaclass has been applied.

Compiler Invocation

The protocol buffer compiler produces Python output when invoked with the --python_out= command-line flag. The parameter to the --python_out= option is the directory where you want the compiler to write your Python output. The compiler creates a .py file for each .proto file input. The names of the output files are computed by taking the name of the .proto file and making two changes:

- The extension (.proto) is replaced with _pb2.py.
- The proto path (specified with the --proto_path= or -I command-line flag) is replaced with the output path (specified with the --python_out= flag).
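The two renaming rules can be sketched in a few lines of Python. This is only an illustration of the rule as stated here, not protoc's actual implementation: the function name python_output_path is hypothetical, and the hyphen handling is an assumption based on the module-name note later in this section.

```python
from pathlib import PurePosixPath


def python_output_path(proto_file, proto_path, python_out):
    """Return the path of the generated module for one .proto input.

    Hypothetical helper: mirrors the documented naming rule only.
    """
    # Rule 2: the proto path prefix is replaced with the output path.
    relative = PurePosixPath(proto_file).relative_to(proto_path)
    # Rule 1: the .proto extension is replaced with _pb2.py.
    stem = str(relative.with_suffix(""))
    # Assumption: hyphens are the only characters that need replacing.
    stem = stem.replace("-", "_")
    return str(PurePosixPath(python_out) / (stem + "_pb2.py"))


print(python_output_path("src/foo.proto", "src", "build/gen"))
print(python_output_path("src/bar/baz.proto", "src", "build/gen"))
```

The helper only computes names; unlike the compiler it creates no files or directories.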

So, for example, let's say you invoke the compiler as follows:

protoc --proto_path=src --python_out=build/gen src/foo.proto src/bar/baz.proto

The compiler will read the files src/foo.proto and src/bar/baz.proto and produce two output files: build/gen/foo_pb2.py and build/gen/bar/baz_pb2.py. The compiler will automatically create the directory build/gen/bar if necessary, but it will not create build or build/gen; they must already exist.

Note that if the .proto file or its path contains any characters which cannot be used in Python module names (for example, hyphens), they will be replaced with underscores. So, the file foo-bar.proto becomes the Python file foo_bar_pb2.py.

When outputting Python code, the protocol buffer compiler's ability to output directly to ZIP archives is particularly convenient, as the Python interpreter is able to read directly from these archives if placed in the PYTHONPATH. To output to a ZIP file, simply provide an output location ending in .zip.

The number 2 in the extension _pb2.py designates version 2 of Protocol Buffers. Version 1 was used primarily inside Google, though you might be able to find parts of it included in other Python code that was released before Protocol Buffers. Since version 2 of Python Protocol Buffers has a completely different interface, and since Python does not have compile-time type checking to catch mistakes, we chose to make the version number be a prominent part of generated Python file names. Currently both proto2 and proto3 use _pb2.py for their generated files.

Packages

The Python code generated by the protocol buffer compiler is completely unaffected by the package name defined in the .proto file. Instead, Python packages are identified by directory structure.

Messages

Given a simple message declaration:

message Foo {}

The protocol buffer compiler generates a class called Foo, which subclasses google.protobuf.Message. The class is a concrete class; no abstract methods are left unimplemented. Unlike C++ and Java, Python generated code is unaffected by the optimize_for option in the .proto file; in effect, all Python code is optimized for code size.

If the message's name is a Python keyword, then its class will only be accessible via getattr(), as described in the Names which conflict with Python keywords section.

You should not create your own Foo subclasses. Generated classes are not designed for subclassing and may lead to 'fragile base class' problems. Besides, implementation inheritance is bad design.

Python message classes have no particular public members other than those defined by the Message interface and those generated for nested fields, messages, and enum types (described below). Message provides methods you can use to check, manipulate, read, or write the entire message, including parsing from and serializing to binary strings. In addition to these methods, the Foo class defines the following static methods:

- FromString(s): Returns a new message instance deserialized from the given string.

Note that you can also use the text_format module to work with protocol messages in text format: for example, the Merge() method lets you merge an ASCII representation of a message into an existing message.

A message can be declared inside another message. For example:

message Foo { message Bar { } }

In this case, the Bar class is declared as a static member of Foo, so you can refer to it as Foo.Bar.

Well Known Types

Protocol buffers provides a number of well-known types that you can use in your .proto files along with your own message types. Some WKT messages have special methods in addition to the usual protocol buffer message methods, as they subclass both google.protobuf.Message and a WKT class.

Any

For Any messages, you can call Pack() to pack a specified message into the current Any message, or Unpack() to unpack the current Any message into a specified message.

You can also call the Is() method to check if the Any message represents the given protocol buffer type.

Timestamp

Timestamp messages can be converted to/from RFC 3339 date string format (JSON string) using the ToJsonString()/FromJsonString() methods.

You can also call GetCurrentTime() to fill the Timestamp message with the current time.

To convert between other time units since epoch, you can call ToNanoseconds(), FromNanoseconds(), ToMicroseconds(), FromMicroseconds(), ToMilliseconds(), FromMilliseconds(), ToSeconds(), or FromSeconds(). The generated code also has ToDatetime() and FromDatetime() methods to convert between Python datetime objects and Timestamps.

Duration

Duration messages have the same methods as Timestamp to convert between JSON string and other time units. To convert between timedelta and Duration, you can call ToTimedelta() or FromTimedelta().

FieldMask

FieldMask messages can be converted to/from JSON string using the ToJsonString()/FromJsonString() methods. In addition, a FieldMask message has the following methods:

- IsValidForDescriptor: Checks whether the FieldMask is valid for Message Descriptor.
- AllFieldsFromDescriptor: Gets all direct fields of Message Descriptor to FieldMask.
- CanonicalFormFromMask: Converts a FieldMask to the canonical form.
- Union: Merges two FieldMasks into this FieldMask.
- Intersect: Intersects two FieldMasks into this FieldMask.
- MergeMessage: Merges fields specified in FieldMask from source to destination.

Struct

Struct messages let you get and set the items directly. For example:
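A minimal sketch of dict-style access on a Struct, assuming the protobuf Python package is installed (struct_pb2 ships with it, so no generated code of your own is needed). The key names are arbitrary.

```python
from google.protobuf.struct_pb2 import Struct

struct_message = Struct()

# Items are set with ordinary subscript assignment.
struct_message["key1"] = 5
struct_message["key2"] = "abc"
struct_message["key3"] = True

# Struct stores every number as a double, so the int 5 reads back as 5.0.
number = struct_message["key1"]
text = struct_message["key2"]
flag = struct_message["key3"]

print(number, text, flag)
```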

To get or create a list/struct, you can call get_or_create_list()/get_or_create_struct().

ListValue

A ListValue message acts like a Python sequence that lets you do the following:
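A short sketch of the sequence-style operations, again assuming the protobuf Python package is installed. The ListValue is obtained from a Struct with get_or_create_list(); the key name "items" and the values are arbitrary.

```python
from google.protobuf.struct_pb2 import Struct

struct_message = Struct()
list_value = struct_message.get_or_create_list("items")

# Sequence-style mutation.
list_value.extend([6, "seven", True])
list_value.append(False)

# Sequence-style reads; numbers come back as floats.
count = len(list_value)
first = list_value[0]
second = list_value[1]

# Nested containers are created in place and returned for filling in.
nested_struct = list_value.add_struct()
nested_struct["inner"] = 1
nested_list = list_value.add_list()
nested_list.extend([1, 2])

print(count, first, second, len(list_value))
```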

To add a ListValue/Struct, call add_list()/add_struct().

Fields

For each field in a message type, the corresponding class has a property with the same name as the field. How you can manipulate the property depends on its type.

As well as a property, the compiler generates an integer constant for each field containing its field number. The constant name is the field name converted to upper-case followed by _FIELD_NUMBER. For example, given the field optional int32 foo_bar = 5;, the compiler will generate the constant FOO_BAR_FIELD_NUMBER = 5.

If the field's name is a Python keyword, then its property will only be accessible via getattr() and setattr(), as described in the Names which conflict with Python keywords section.

Singular Fields (proto2)

If you have a singular (optional or required) field foo of any non-message type, you can manipulate the field foo as if it were a regular field. For example, if foo's type is int32, you can say message.foo = 123 and then read message.foo back.

Note that setting foo to a value of the wrong type will raise a TypeError.

If foo is read when it is not set, its value is the default value for that field. To check if foo is set, or to clear the value of foo, you must call the HasField() or ClearField() methods of the Message interface. For example, message.HasField('foo') is True after message.foo = 123 and False again after message.ClearField('foo').

Singular Fields (proto3)

If you have a singular field foo of any non-message type, you can manipulate the field foo as if it were a regular field. For example, if foo's type is int32, you can say message.foo = 123 and then read message.foo back.

Note that setting foo to a value of the wrong type will raise a TypeError.

If foo is read when it is not set, its value is the default value for that field. To clear the value of foo and reset it to the default value for its type, you call the ClearField() method of the Message interface, for example message.ClearField('foo').

Unlike in proto2, you cannot call HasField() for a singular non-message field in proto3, and the library will throw an exception if you try to do this.

Singular Message Fields

Message types work slightly differently. You cannot assign a value to an embedded message field. Instead, assigning a value to any field within the child message implies setting the message field in the parent. In proto3, you can also use the parent message's HasField() method to check if a message type field value has been set, which you can't do with other types of proto3 singular field.

So, for example, let's say you have a .proto definition in which message Foo has a field bar of message type Bar. You cannot assign a message to that field directly (foo.bar = Bar() is not allowed).

Instead, to set bar, you simply assign a value directly to a field within bar, and - presto! - foo has a bar field.

Similarly, you can set bar using the Message interface's CopyFrom() method. This copies all the values from another message of the same type as bar.

Note that simply reading a field inside bar does not set the field.

If you need the 'has' bit on a message that does not have any fields you can or want to set, you may use the SetInParent() method.

Repeated Fields

Repeated fields are represented as an object that acts like a Python sequence. As with embedded messages, you cannot assign the field directly, but you can manipulate it. For example, given this message definition:

You can do the following:
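As a stand-in for a generated class, the sketch below uses the well-known FieldMask type, whose paths field is a repeated string. It assumes the protobuf Python package is installed; the path strings are arbitrary.

```python
from google.protobuf.field_mask_pb2 import FieldMask

mask = FieldMask()

# The repeated field behaves like a Python sequence.
mask.paths.append("user.name")
mask.paths.extend(["user.email", "user.id"])
length_after_adds = len(mask.paths)

# Items can be replaced and deleted by index.
mask.paths[0] = "user.display_name"
del mask.paths[1]
remaining = list(mask.paths)

# ClearField() empties the whole repeated field.
mask.ClearField("paths")
length_after_clear = len(mask.paths)

print(length_after_adds, remaining, length_after_clear)
```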

The ClearField() method of the Message interface works in addition to using Python del.

Repeated Message Fields

Repeated message fields work similarly to repeated scalar fields, except the corresponding Python object does not have an append() function. Instead, it has an add() function that creates a new message object, appends it to the list, and returns it for the caller to fill in. It also has an extend() function that appends an entire list of messages, but makes a copy of every message in the list. This is done so that messages are always owned by the parent message to avoid circular references and other confusion that can happen when a mutable data structure has multiple owners.

For example, given this message definition:

You can do the following:

Groups (proto2)

Note that groups are deprecated and should not be used when creating new message types – use nested message types instead.

A group combines a nested message type and a field into a single declaration, and uses a different wire format for the message. The generated message has the same name as the group. The generated field's name is the lowercased name of the group.

For example, except for wire format, the following two message definitions are equivalent:

A group is either required, optional, or repeated. A required or optional group is manipulated using the same API as a regular singular message field. A repeated group is manipulated using the same API as a regular repeated message field.

For example, given the above SearchResponse definition, you can do the following:

Map Fields

Given this message definition:

The generated Python API for the map field is just like a Python dict:

As with embedded message fields, messages cannot be directly assigned into a map value. Instead, to add a message as a map value you reference an undefined key, which constructs and returns a new submessage:

You can find out more about undefined keys in the next section.

Referencing undefined keys

The semantics of Protocol Buffer maps differ slightly from Python dicts when it comes to undefined keys. In a regular Python dict, referencing an undefined key raises a KeyError exception.

However, in Protocol Buffers maps, referencing an undefined key creates the key in the map with a zero/false/empty value. This behavior is more like the Python standard library defaultdict.

This behavior is especially convenient for maps with message type values, because you can directly update the fields of the returned message.
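The difference can be seen with the standard library alone. The sketch below is an analogy, not protobuf code: SubMessage is a hypothetical stand-in for a generated message class, and a defaultdict plays the role of the map field.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SubMessage:
    """Hypothetical stand-in for a generated message type."""
    value: int = 0


# A plain dict raises KeyError for an undefined key.
plain = {}
try:
    plain[10]
    plain_raised = False
except KeyError:
    plain_raised = True

# A defaultdict creates the entry on first reference, like a protobuf map.
message_map = defaultdict(SubMessage)
message_map[10].value = 7   # created, then updated in place
_ = message_map[20]         # merely reading also creates an entry

print(plain_raised, sorted(message_map), message_map[10].value)
```

The last line of the sketch is the part that tends to surprise readers: a bare lookup is enough to add a key.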

Note that even if you don't assign any values to message fields, the submessage is still created in the map:

This is different from regular embedded message fields, where the message itself is only created once you assign a value to one of its fields.

As it may not be immediately obvious to anyone reading your code that m.message_map[10] alone, for example, may create a submessage, we also provide a get_or_create() method that does the same thing but whose name makes the possible message creation more explicit, for example m.message_map.get_or_create(10).

Enumerations

In Python, enums are just integers. A set of integral constants is defined corresponding to the enum's defined values. For example, given:

message Foo { enum SomeEnum { VALUE_A = 0; VALUE_B = 5; VALUE_C = 1234; } }

The constants VALUE_A, VALUE_B, and VALUE_C are defined with values 0, 5, and 1234, respectively. You can access SomeEnum if desired. If an enum is defined in the outer scope, the values are module constants; if it is defined within a message (like above), they become static members of that message class.

For example, you can access the values in the three following ways for the following enum in a proto:

An enum field works just like a scalar field.

If the enum's name (or an enum value) is a Python keyword, then its object (or the enum value's property) will only be accessible via getattr(), as described in the Names which conflict with Python keywords section.

The values you can set in an enum depend on your protocol buffers version:

- In proto2, an enum cannot contain a numeric value other than those defined for the enum type. If you assign a value that is not in the enum, the generated code will throw an exception.
- proto3 uses open enum semantics: enum fields can contain any int32 value.

Enums have a number of utility methods for getting field names from values and vice versa, lists of fields, and so on - these are defined in enum_type_wrapper.EnumTypeWrapper (the base class for generated enum classes). So, for example, if you have the following standalone enum in myproto.proto:

...you can do this:

For an enum declared within a protocol message, such as Foo above, the syntax is similar:

If multiple enum constants have the same value (aliases), the first constant defined is returned.

In the above example, Foo_Name(5) returns 'VALUE_B'.

Oneof

Given a message with a oneof:

The Python class corresponding to Foo will have properties called name and serial_number just like regular fields. However, unlike regular fields, at most one of the fields in a oneof can be set at a time, which is ensured by the runtime.

The message class also has a WhichOneof method that lets you find out which field (if any) in the oneof has been set. This method returns the name of the field that is set, or None if nothing has been set.

HasField and ClearField also accept oneof names in addition to field names.

Note that calling ClearField on a oneof just clears the currently set field.

Names which conflict with Python keywords

If the name of a message, field, enum, or enum value is a Python keyword, then the name of its corresponding class or property will be the same, but you'll only be able to access it using Python's getattr() and setattr() built-in functions, and not via Python's normal attribute reference syntax (i.e. the dot operator).

For example, if you have the following .proto definition:

You would access those fields like this:
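The access pattern can be tried without any generated code. In this sketch a SimpleNamespace stands in for a generated message instance, and the field name from is an assumption chosen because it is a Python keyword.

```python
from types import SimpleNamespace

# Stand-in for an instance of a generated message class (assumption).
baz = SimpleNamespace()

# Keyword-named attributes are reachable through the built-ins.
setattr(baz, "from", 99)
value = getattr(baz, "from")

# The dot operator cannot be used: the source does not even parse.
try:
    compile("baz.from = 99", "<example>", "exec")
    dot_syntax_parses = True
except SyntaxError:
    dot_syntax_parses = False

print(value, dot_syntax_parses)
```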

By contrast, trying to use obj.attr syntax to access these fields results in Python raising syntax errors when parsing your code.

Extensions (proto2 only)

Given a message with an extension range:

The Python class corresponding to Foo will have a member called Extensions, which is a dictionary mapping extension identifiers to their current values.

Given an extension definition:

The protocol buffer compiler generates an 'extension identifier' called bar. The identifier acts as a key to the Extensions dictionary. The result of looking up a value in this dictionary is exactly the same as if you accessed a normal field of the same type. So, given the above example, you could do:

Note that you need to specify the extension identifier constant, not just a string name: this is because it's possible for multiple extensions with the same name to be specified in different scopes.

Analogous to normal fields, Extensions[...] returns a message object for singular messages and a sequence for repeated fields.

The Message interface's HasField() and ClearField() methods do not work with extensions; you must use HasExtension() and ClearExtension() instead.

Services

If the .proto file contains the following line:

option py_generic_services = true;

Then the protocol buffer compiler will generate code based on the service definitions found in the file as described in this section. However, the generated code may be undesirable as it is not tied to any particular RPC system, and thus requires more levels of indirection than code tailored to one system. If you do NOT want this code to be generated, add this line to the file:

option py_generic_services = false;

If neither of the above lines is given, the option defaults to false, as generic services are deprecated. (Note that prior to 2.4.0, the option defaulted to true.)

RPC systems based on .proto-language service definitions should provide plugins to generate code appropriate for the system. These plugins are likely to require that abstract services are disabled, so that they can generate their own classes of the same names. Plugins are new in version 2.3.0 (January 2010).

The remainder of this section describes what the protocol buffer compiler generates when abstract services are enabled.

Interface

Given a service definition:

The protocol buffer compiler will generate a class Foo to represent this service. Foo will have a method for each method defined in the service definition. In this case, the method Bar is defined as:

def Bar(self, rpc_controller, request, done)

The parameters are equivalent to the parameters of Service.CallMethod(), except that the method_descriptor argument is implied.

These generated methods are intended to be overridden by subclasses. The default implementations simply call controller.SetFailed() with an error message indicating that the method is unimplemented, then invoke the done callback. When implementing your own service, you must subclass this generated service and implement its methods as appropriate.

Foo subclasses the Service interface. The protocol buffer compiler automatically generates implementations of the methods of Service as follows:

- GetDescriptor: Returns the service's ServiceDescriptor.
- CallMethod: Determines which method is being called based on the provided method descriptor and calls it directly.
- GetRequestClass and GetResponseClass: Returns the class of the request or response of the correct type for the given method.

Stub

The protocol buffer compiler also generates a 'stub' implementation of every service interface, which is used by clients wishing to send requests to servers implementing the service. For the Foo service (above), the stub implementation Foo_Stub will be defined.

Foo_Stub is a subclass of Foo. Its constructor takes an RpcChannel as a parameter. The stub then implements each of the service's methods by calling the channel's CallMethod() method.

The Protocol Buffer library does not include an RPC implementation. However, it includes all of the tools you need to hook up a generated service class to any arbitrary RPC implementation of your choice. You need only provide implementations of RpcChannel and RpcController.

Plugin Insertion Points

Code generator plugins which want to extend the output of the Python code generator may insert code of the following types using the given insertion point names.

- imports: Import statements.
- module_scope: Top-level declarations.
- class_scope:TYPENAME: Member declarations that belong in a message class. TYPENAME is the full proto name, e.g. package.MessageType.

Do not generate code which relies on private class members declared by the standard code generator, as these implementation details may change in future versions of Protocol Buffers.

C++ Implementation

There is also a C++ implementation for Python messages via a Python extension for better performance. Implementation type is controlled by an environment variable PROTOCOL_BUFFERS_PYTHON_IMPLEMENTATION (valid values: 'cpp' and 'python'). The default value is currently 'python' but will be changed to 'cpp' in a future release.

Note that the environment variable needs to be set before installing the protobuf library, in order to build and install the python extension. The C++ implementation also requires CPython platforms. See python/INSTALL.txt for detailed install instructions.

This is a step-by-step guide to using the serial port from a program running under Linux; it was written for the Raspberry Pi serial port with the Raspbian Wheezy distribution. However, the same code should work on other systems.


Step 0: Note whether your Raspberry Pi has Wireless/Bluetooth capability

By default the Raspberry Pi 3 and Raspberry Pi Zero W devices use the more capable /dev/ttyAMA0 to communicate over Bluetooth, so if you want to program the serial port to control the IO pins on the header, you should use the auxiliary UART device /dev/ttyS0 instead. On these wireless devices, it is possible to switch the GPIO serial port back to /dev/ttyAMA0 with `/boot/config.txt` directives, either by disabling Bluetooth with `dtoverlay=pi3-disable-bt` or by forcing Bluetooth to use the mini-UART with `dtoverlay=pi3-miniuart-bt`. See https://www.raspberrypi.org/documentation/configuration/uart.md for details.

Step 1: Connect to a terminal emulator using a PC

Follow the instructions at RPi_Serial_Connection#Connections_and_signal_levels, and RPi_Serial_Connection#Connection_to_a_PC, so that you end up with your Pi's serial port connected to a PC, running a terminal emulator such as minicom or PuTTY.

The default Wheezy installation sends console messages to the serial port as it boots, and runs getty so you can log in using the terminal emulator. If you can do this, the serial port hardware is working.

Troubleshooting

| Problem | Possible causes |
|---|---|
| Nothing at all shown on terminal emulator | Connected to wrong pins on GPIO header; faulty USB-serial cable or level shifter; /boot/cmdline.txt and /etc/inittab have already been edited (see below); flow control turned on in terminal emulator; wrong baud rate in terminal emulator |
| Text appears corrupted | Wrong baud rate, parity, or data settings in terminal emulator; faulty level shifter |
| Can receive but not send (nothing happens when you type) | Connected to wrong pins on GPIO header; flow control turned on in terminal emulator; faulty level shifter |

Step 2: Test with Python and a terminal emulator

You will now need to edit files /etc/inittab and /boot/cmdline.txt as described at RPi_Serial_Connection#Preventing_Linux_using_the_serial_port. When you have done this - remember to reboot after editing - the terminal emulator set up in Step 1 will no longer show any output from Linux - it is now free for use by programs. Leave the terminal emulator connected and running throughout this step.

We will now write a simple Python program which we can talk to with the terminal emulator. You will need to install the PySerial package (the python-serial package on Raspbian).

Now, on the Raspberry Pi, type the following code into a text editor, taking care to get the indentation correct (note that for devices with wireless, such as the Pi 3 and Zero W, you must use /dev/ttyS0 instead of /dev/ttyAMA0):
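A minimal sketch of such a program, written for Python 3 with PySerial. The device name, the 3 second timeout and the read(10) call follow the description in this guide; the baud rate of 115200 is an assumption and must match the terminal emulator. The echo_once helper and the device-exists guard are additions that let the logic be tried on a machine without the serial device.

```python
import os

DEVICE = "/dev/ttyAMA0"   # use "/dev/ttyS0" on a Pi 3 or Zero W
BAUDRATE = 115200         # assumption: must match the terminal emulator


def echo_once(port):
    """Send a prompt, wait for up to 10 bytes, then report what arrived."""
    port.write(b"\r\nSay something:")
    rcv = port.read(10)   # returns early, possibly empty, on timeout
    port.write(b"\r\nYou sent:" + repr(rcv).encode("ascii"))
    return rcv


def main():
    # PySerial is imported here so echo_once() can be tried without it.
    import serial
    port = serial.Serial(DEVICE, baudrate=BAUDRATE, timeout=3.0)
    while True:
        echo_once(port)


# Only start the endless loop when the serial device is present.
if __name__ == "__main__" and os.path.exists(DEVICE):
    main()
```

Any object with write() and read() methods can be passed to echo_once(), which is convenient for checking the logic before connecting hardware.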

Save the result as file serialtest.py, and then run it with:

If all is working, you should see the following lines appearing repeatedly, one every 3 seconds, on the terminal emulator:

Try typing some characters in the terminal emulator window. You will not see the characters you type appear straight away - instead you will see something like this:

If you typed Enter in the terminal emulator, it will appear as the character sequence \r - this is Python's way of representing the ASCII 'CR' (Control-M) character.

Troubleshooting

| Problem | Possible causes |
|---|---|
| Python error 'No module named serial' | python-serial package not installed |
| Python error '[Errno 13] Permission denied: /dev/ttyAMA0' | User not in group 'dialout'; getty is still running (from /etc/inittab); /dev/ttyAMA0 doesn't have rw access by group 'dialout' |

For other problems (e.g. text appears corrupted) refer to the troubleshooting table in Step 1.

More about reading serial input

The serial connection we are using above is:

- bi-directional - the PC transmits characters (actually, 8-bit values which are interpreted as ASCII characters) which are received by the Pi, and the Pi can transmit characters which are received by the PC.

- full-duplex - meaning that the PC-to-Pi transmission can happen at the same time as the Pi-to-PC transmission

- byte-oriented - each byte is transmitted and received independently of the next byte. In other words, the serial communication does not group transmitted data into packets, or lines of text; if you want to send messages longer than one byte, you will need to add your own means of grouping bytes together.

So, the line rcv = port.read(10) will wait for characters to arrive from the PC, and:

- if it has read 10 characters, the call to read() will finish, returning those 10 characters as a string.

- if it has been waiting for the timeout period given to serial.Serial() - in this case, 3 seconds - it will return whatever characters have arrived so far. (If no characters arrive, this will return an empty string).

Any characters which arrive after the read() call has finished will be saved (buffered) by the kernel and can be retrieved the next time you call read(). However, there is a limit to how many characters can be saved; once the buffer is full characters will be lost.

This means that a call such as port.read(10) is only useful if you know the transmitting end is going to send exactly 10 bytes of data; if it sends more than 10, the extra bytes will not be returned from the call, and if it sends fewer than 10, your program will pause until the timeout has expired.
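One common way to group bytes into messages over a byte-oriented link is a length prefix. The sketch below is one possible scheme, not something PySerial provides: the function names and the 2-byte big-endian header are assumptions, and an io.BytesIO object stands in for the serial port so the code runs without hardware.

```python
import io
import struct


def read_exact(port, n):
    """Call port.read() until n bytes have arrived or a read returns nothing."""
    chunks = []
    remaining = n
    while remaining > 0:
        chunk = port.read(remaining)
        if not chunk:            # timeout or end of data
            break
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)


def send_message(port, payload):
    """Write a 2-byte big-endian length followed by the payload."""
    port.write(struct.pack(">H", len(payload)) + payload)


def receive_message(port):
    """Return one complete payload, or None if it did not fully arrive."""
    header = read_exact(port, 2)
    if len(header) < 2:
        return None
    (length,) = struct.unpack(">H", header)
    body = read_exact(port, length)
    return body if len(body) == length else None


# Stand-in for a serial link: write two messages, then read them back.
wire = io.BytesIO()
send_message(wire, b"hello")
send_message(wire, b"a longer message")
wire.seek(0)
first = receive_message(wire)
second = receive_message(wire)
third = receive_message(wire)    # nothing left to read
print(first, second, third)
```

With a real port, a None result means the timeout expired mid-message, and the caller has to decide whether to retry or resynchronise.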

Using the readline() call

If you are reading data from the serial port organised as (possibly variable-length) lines of text, you may want to use PySerial's readline() method. To see the effect of this, replace the rcv = port.read(10) with rcv = port.readline() in the serialtest.py program above.


The documentation for readline() says it will receive characters from the serial port until a newline (Python '\n') character is received; when it gets one it returns the line of text it has got so far. You may expect, therefore, that typing a few characters on the terminal emulator, followed by the Enter key, will return those characters immediately.

However, on many terminal emulators this won't work! Pressing the Enter key sends an ASCII CR (13 decimal, Control-M) character, but a newline character is ASCII LF (10 decimal, Control-J). So, readline() will not finish until you type Control-J in the terminal (or until the timeout has expired).

PySerial's documentation gives various means of reading other newline characters; however, the most reliable solution is to do it yourself:
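A minimal sketch of a do-it-yourself line reader that stops at CR, for Python 3. The function name readline_cr and the max_len limit are assumptions; it relies only on read(1) returning an empty bytes object when the timeout expires, so an io.BytesIO object can stand in for the port when trying it out.

```python
import io


def readline_cr(port, max_len=1024):
    """Read bytes until a CR arrives, a read times out, or max_len is reached."""
    line = bytearray()
    while len(line) < max_len:
        ch = port.read(1)        # empty result means the timeout expired
        if not ch:
            break
        line += ch
        if ch == b"\r":
            break
    return bytes(line)


# Stand-in for a serial port that has received "hello<CR>world".
fake_port = io.BytesIO(b"hello\rworld")
first = readline_cr(fake_port)
second = readline_cr(fake_port)
print(first, second)
```

In serialtest.py this would be called as rcv = readline_cr(port) in place of the read(10) or readline() call.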

Retrieved from 'https://elinux.org/index.php?title=Serial_port_programming&oldid=489886'